Backend infrastructure for AI solutions.

Pipelogic gives technical teams one platform to build, deploy, and manage AI apps, from reusable components to full production systems.

Built for Speed. Designed for Scale.

One platform to code, connect, deploy, and share — on any infrastructure.

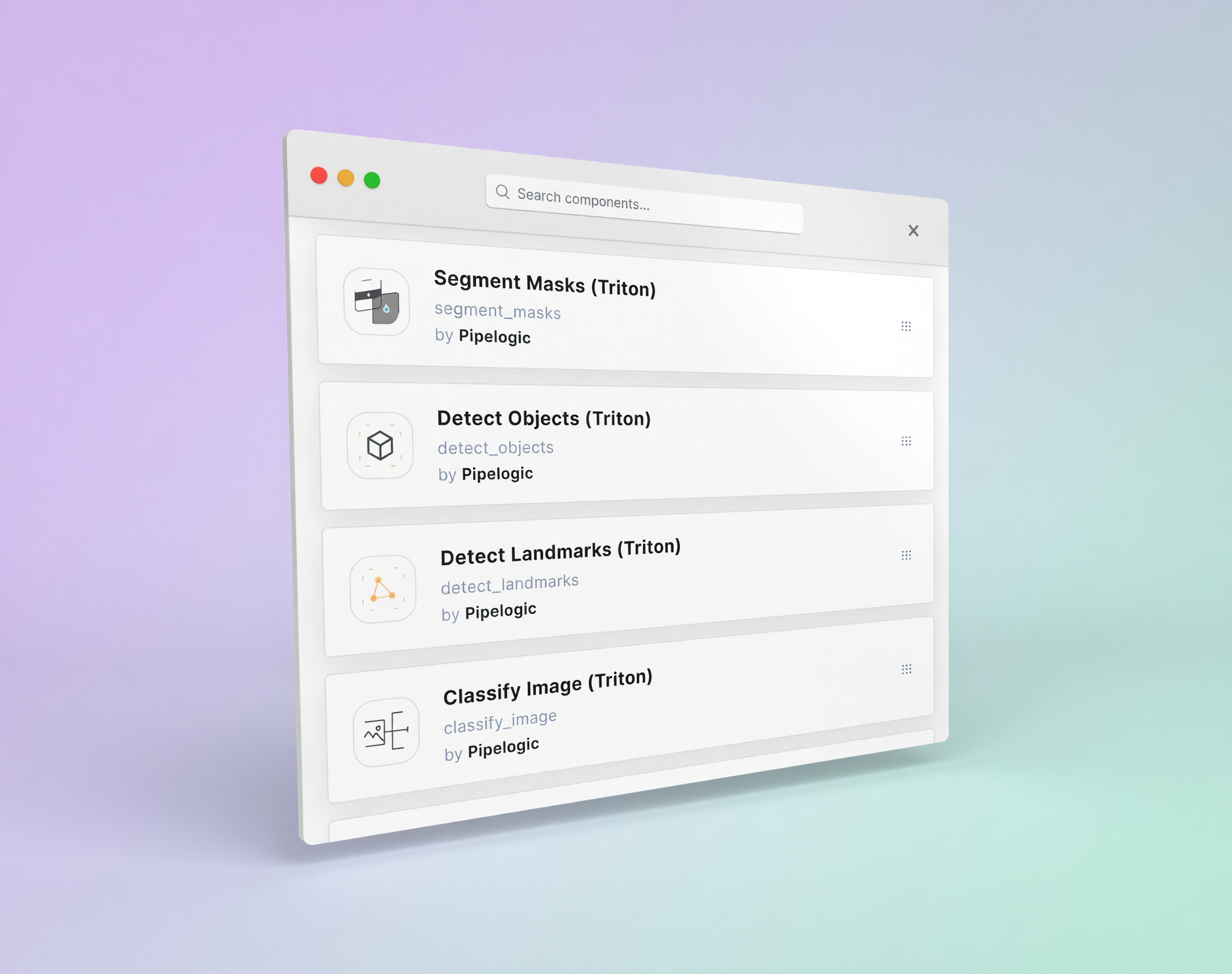

Build Components

Use Pipelogic’s CLI with your IDE to build components in Python, C, or C++. Bring your own libraries, package private logic, and combine it with public components to move faster. For inference, use your own code or connect supported engines exposed as services, including vLLM, Ollama, SGLang, Triton, and TorchServe.


Build Backends

Drag and drop components to design backend logic visually. Pipelogic validates connections in real time so teams can catch incompatible data flows before deployment. Connect over HTTP, WebSocket, WebRTC, gRPC, RTSP/RTMP/HLS, Kafka, RabbitMQ, MQTT, S3/GCS, email, and messaging platforms such as Slack, Discord, Telegram, and WhatsApp.

Build Apps

Build interfaces in HTML, JavaScript, and CSS, or use frameworks such as React, Angular, Vue, and Svelte. Connect app inputs and outputs to Backends through standard APIs and streaming protocols. We primarily support web apps, but you can use Pipelogic as an AI backbone for desktop and mobile apps too.


Deploy All Together

Deploy your solution from one workflow across Windows, Linux, or macOS; x86 or ARM; public cloud, private cloud, dedicated servers, on-prem, or fully air-gapped environments. Pipelogic handles orchestration, scaling, reliability, and performance so teams can stay focused on the product.

Share Your Work

Create a public profile, showcase apps and components, and make it easy for others to try what you’ve built. Share open-source components and open-weight models publicly, or publish private unlisted apps for controlled access on eligible plans.


Built for the AI stack you already use

From inference servers and vision systems to multimodal sensors, libraries, operating systems, and hardware, Pipelogic integrates with the tools and infrastructure your team relies on.

FAQ

Find answers about setup, integrations, AI workflows, security, and getting the most out of the platform.

Yes. Components are isolated, so you can use your preferred tools and external libraries without affecting the rest of the system.

Today, Pipelogic supports Python, C, and C++.

A technical team should be able to build a simple first component quickly with the docs, starter examples, and onboarding flow.

Pipelogic supports structured data and real-time multimodal streams, including video, audio, thermal, and sensor data, across batch and streaming workflows.

Pipelogic combines visual backend design with reusable components, low-latency data handling, and deployment workflows designed for real production environments.

There can be a small overhead, but the trade-off is easier scaling, easier updates, and better maintainability.

Pipelogic can run in public cloud, private cloud, on-prem, hybrid, and fully air-gapped environments.

Yes.

Data in transit is protected with TLS, stored data is encrypted at rest, and access is controlled through roles, keys, tokens, and deployment isolation.

Pipelogic supports role-based access control, API keys, OAuth 2.0, and enterprise directory integrations such as Active Directory and LDAP.

Yes.

Yes, where the underlying components and licenses allow it. Some proprietary third-party components may require separate licensing or migration support.

The Pipelogic customer success team offers email support, community help via Discord, and enterprise-aligned support options such as WhatsApp and phone support.

Pipelogic is best positioned for technical teams building AI apps and workflows, especially where deployment, integration, and real-world data handling matter.

Pipelogic is self-serve for getting started, sales-assisted for larger teams, and available through partner-led delivery.

Ready to Build?

Join technical teams using Pipelogic to build and deploy AI apps faster.